A requirements file makes it easy to share your project with others. They can use it to install all of your project's dependencies on another computer, in versions that are compatible with one another, so they get the same Python modules you have listed in your requirements file and can run your project without any problems.
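To make "the same Python modules" concrete, here is a minimal sketch. It assumes a requirements file that pins exact versions with `name==version` lines, as `pip freeze` produces. The helper name `check_requirements` is hypothetical. It uses only the standard library's `importlib.metadata` to report pinned packages that are missing or installed at a different version.

```python
from importlib.metadata import PackageNotFoundError, version


def check_requirements(lines):
    """Return (name, wanted, installed) for every pinned requirement that is not satisfied.

    Hypothetical helper: only exact pins of the form "name==version" are checked;
    other specifiers (>=, ~=, URLs) are skipped and environment markers after ';' are ignored.
    """
    problems = []
    for raw in lines:
        line = raw.split("#", 1)[0]           # drop trailing comments
        line = line.split(";", 1)[0].strip()  # drop environment markers and whitespace
        if not line or "==" not in line:
            continue  # blank line or non-exact specifier: not checked here
        name, wanted = (part.strip() for part in line.split("==", 1))
        try:
            installed = version(name)
        except PackageNotFoundError:
            installed = None  # the package is not installed at all
        if installed != wanted:
            problems.append((name, wanted, installed))
    return problems
```

For example, `check_requirements(open("requirements.txt"))` returns an empty list when every pinned package is installed at exactly the pinned version. The real installation step is still `pip install -r requirements.txt`; this only verifies the result.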

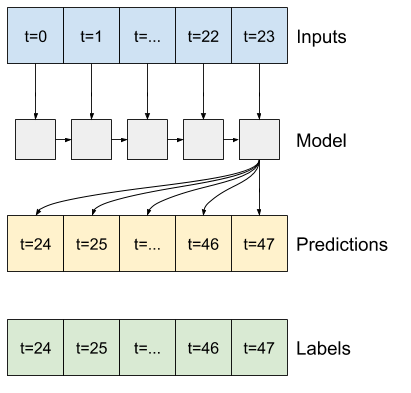

Using a Python requirements file comes with a lot of benefits. It lets you keep track of the Python modules and packages your project uses, and it simplifies installing all of the required modules on any computer without having to search through online documentation or Python package archives. It makes your life easier and increases your productivity.

Suppose you want to merge each of the per-stock sequences into a bigger one that contains the data for all the stocks and is used for training. You can append the created TimeseriesGenerators to a Python list:

```python
from tensorflow.keras.preprocessing.sequence import TimeseriesGenerator

stock_timegenerators = []
for x, y in stock_data:  # stock_data: placeholder for one (inputs, targets) pair per stock
    stock_timegenerators.append(TimeseriesGenerator(x, y, length=4, sampling_rate=1, batch_size=1))
```

The result is a list of TimeseriesGenerators, one per stock, which you can use by iterating over the list or by referencing an element by index.

Having multiple Keras TimeseriesGenerators also means that you're training multiple LSTM models, one for each stock. You can use the same approach to handle multiple models efficiently:

```python
from tensorflow.keras.layers import LSTM, Dense
from tensorflow.keras.models import Sequential

lstm_models = []
for time_series_gen in stock_timegenerators:
    # lstm_models.append(create_model()): you could also build each model in a function
    model = Sequential([LSTM(32, input_shape=(n_input, n_features)), Dense(1)])  # Dense(1): assumed single-value output
    model.compile(optimizer="adam", loss="mse")  # optimizer and loss are assumptions
    model.fit(time_series_gen, steps_per_epoch=1, epochs=5)
    lstm_models.append(model)
```

This produces a list of models that can easily be referenced by index, so you can create multiple LSTM models, each trained on the TimeseriesGenerator of a different stock. Here `n_input` must equal the generator's `length` (4 above) and `n_features` is the number of input columns. Note that with `batch_size=1`, `steps_per_epoch=1` trains on a single window per epoch; omit it to use every window.

I'm exploring the possibility of preprocessing all the data for each stock separately, saving it as .npy files, and then using a generator to load a random sample of those .npy files to batch data to the model. I'm not entirely sure how to approach this yet, though.
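The .npy idea could look roughly like the following minimal sketch. It is an assumption, not the commenter's implementation: each stock's preprocessed inputs and targets are saved as hypothetical files `<stock>_x.npy` and `<stock>_y.npy`, and a generator picks a random stock, memory-maps its files, and yields one window per call using the same pairing as `TimeseriesGenerator(length=4, sampling_rate=1)`.

```python
import os
import tempfile

import numpy as np


def save_stock(directory, stock, x, y):
    """Save one stock's preprocessed inputs x and targets y as two .npy files."""
    np.save(os.path.join(directory, f"{stock}_x.npy"), x)
    np.save(os.path.join(directory, f"{stock}_y.npy"), y)


def random_stock_windows(directory, stocks, num_samples, length=4, seed=None):
    """Yield num_samples (window, target) pairs, each from a randomly chosen stock.

    Each window is x[i - length:i] with target y[i], the pairing that
    TimeseriesGenerator(length=length, sampling_rate=1) uses, so no window
    ever mixes two stocks.
    """
    rng = np.random.default_rng(seed)
    for _ in range(num_samples):
        stock = stocks[rng.integers(len(stocks))]
        # mmap_mode="r" reads only the requested rows instead of the whole file
        xs = np.load(os.path.join(directory, f"{stock}_x.npy"), mmap_mode="r")
        ys = np.load(os.path.join(directory, f"{stock}_y.npy"), mmap_mode="r")
        i = int(rng.integers(length, len(xs)))  # i in [length, len(xs) - 1]
        # np.array(...) copies the rows out of the memory-mapped file
        yield np.array(xs[i - length:i]), np.array(ys[i])


# Usage with two small synthetic stocks (illustrative data only)
with tempfile.TemporaryDirectory() as tmp:
    for name, offset in [("AAA", 0.0), ("BBB", 100.0)]:
        series = np.arange(10, dtype="float32") + offset
        save_stock(tmp, name, series.reshape(-1, 1), series)
    samples = list(random_stock_windows(tmp, ["AAA", "BBB"], num_samples=5, seed=0))
```

With TensorFlow installed, a generator like this could be wrapped with `tf.data.Dataset.from_generator` (passing an `output_signature`) and then grouped with `.batch()`. Because stocks and windows are sampled with replacement, an "epoch" here does not guarantee that every window is seen exactly once.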
I think the earlier answer is slightly missing the point. If I understand your question, it's not that you want to train one model per stock; you want one model trained on the entire dataset. If you have enough memory, you could manually create the sequences and hold the entire dataset in memory. The issue I'm facing is similar, except that I simply can't hold everything in memory (see the related question "Creating a TimeseriesGenerator with multiple inputs"). A generator that feeds a single unique sequence from a random stock at each call would let me use the `.batch()` method of TensorFlow's `Dataset` type.
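If the data does fit in memory, "manually create the sequences" could look like this minimal sketch. It is an assumption rather than code from the thread: the hypothetical helper `make_windows` reproduces the windows that `TimeseriesGenerator(x, y, length=4, sampling_rate=1, batch_size=1)` yields for one stock, and windowing each stock separately before concatenating keeps any window from spanning two stocks.

```python
import numpy as np


def make_windows(x, y, length=4):
    """Return (windows, targets) matching TimeseriesGenerator(length=length, sampling_rate=1).

    Sample j is x[j:j + length] and its target is y[j + length].
    """
    windows = np.stack([x[i - length:i] for i in range(length, len(x))])
    return windows, y[length:]


def build_dataset(stocks, length=4):
    """Window each (x, y) stock separately, then stack everything into one training set."""
    parts = [make_windows(x, y, length) for x, y in stocks]
    all_x = np.concatenate([w for w, _ in parts])
    all_y = np.concatenate([t for _, t in parts])
    return all_x, all_y


# Two synthetic "stocks" with one feature each (illustrative data only)
stock_a = np.arange(10, dtype="float32").reshape(-1, 1)
stock_b = np.arange(100, 107, dtype="float32").reshape(-1, 1)
all_x, all_y = build_dataset([(stock_a, stock_a[:, 0]), (stock_b, stock_b[:, 0])])
```

The resulting arrays can be passed to a single model, e.g. `model.fit(all_x, all_y, batch_size=32)`, which trains one model on all stocks instead of one model per stock.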
